Localising LLMs – AI innovation in low resource languages

The integration of AI presents as many challenges to operators as it does opportunities. We recently explored how telcos are ensuring an ethical approach to AI in terms of corporate governance, and how they are able to demonstrate this through assurance models and tools.

However, while responsible AI adoption is critical, it must not stymie the opportunities that the technology can enable. Given the rapid proliferation of AI, operators may feel overwhelmed by a sense that they should be racing to adopt AI despite the optimal use cases not yet being apparent.

The GSMA Foundry was created as a focal point for innovation in the industry, with the aim of pooling collective knowledge across numerous subjects, including monetization of 5G, non-terrestrial networks and their integration into terrestrial networks, and of course AI.

Getting Started with AI

Richard Cockle, Head of the GSMA Foundry, summarises its purpose by saying: “I think the industry suffered in the past from having innovation happening all over the place, without it being able to come together and people all being able to learn across the industry as a whole.”

The GSMA Foundry has made AI a core focus in order to demonstrate the role that the technology can play in the mobile space, and to support the practical application of these technologies within operators. The GSMA represents over 750 mobile operators, and while investment in AI is underway across the sector, not all of these operators are multinational corporates. Smaller operators don’t necessarily have the technology investment funds to develop their own AI solutions, so access to the resources needed to invest in AI risks becoming a new divide.

To address this, the GSMA Foundry has spearheaded several initiatives aimed at the operator community, including a library of over 50 case studies detailing applications for AI in the telco sector. While the process of gathering the proof points is still underway, Cockle noted that the case studies provide the operator community and the broader mobile ecosystem with information about how they can use AI technologies, covering everything from network efficiency and operations to customer experience and revenue growth.

Cockle noted that beyond cost savings, AI enables opportunities in the telco community both at multinational corporate level and local operator level. “A lot of companies are struggling with the implementation of AI. They know they should be doing it, but what exactly they should be doing - and how they should be driving it into the technology elements of their business - has been a stumbling block. We're hoping that this can help the smaller operators and the smaller ecosystem players take that step.”

Cost is of course an issue – AI can require a huge amount of upfront investment. To assist with this, GSMA Foundry has partnered with IBM to launch the AI Starter Pack. Predominantly focused on Generative AI, this allows operators to receive support from IBM to get a feel for how they could use AI without any long-term commitment. “It’s about opening up a facility which enables the smaller MNOs to get started on smaller ecosystems - we currently have five MNOs that are going through that process,” notes Cockle.

Large models, smaller languages

Large language models (LLMs) are one of the better-known AI applications, but most generative AI is being created in the seven most widespread languages globally. However, Cockle noted that initiatives are underway to develop LLMs in low resource languages – both to facilitate innovation in particular markets and to preserve the nuance of local languages. The Barcelona Supercomputing Center has created a local LLM in Catalan. Using resources from the local government, it developed a unique voice capture method that uses the voices of local people to populate the LLM, allowing it to pick up nuances in the dialect and provide a more authentic, personal model.

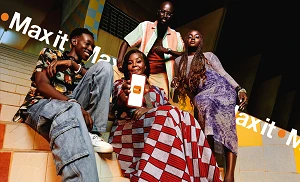

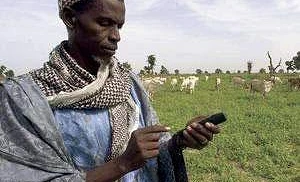

Cockle notes that the business applications of such technology are significant for operators. In the US or UK, international services are the norm as content defaults to English; however, operators in markets with low resource languages have an opportunity to become content creators and content providers. For example, a localised music or TV service might prove more popular than its international rival, and this could be offered by the operator directly – a particularly appealing prospect for operators in emerging markets. Africa is the most linguistically diverse continent on earth, with nearly 2,000 languages spoken. Of these, only around 75 have more than one million speakers, and over 300 are classified as endangered. LLMs could represent a lifeline for preserving languages – particularly given that many are almost exclusively oral, with little written tradition.

Cockle’s team pitched the low resource language LLM concept to a strategy group and found significant interest among operators in emerging markets. In particular, VEON was keen to develop LLMs for its various operations. Alexey Sharavar, CEO of VEON subsidiary QazCode, discussed how Beeline Kazakhstan is creating a local Kazakh language model with the support of the Barcelona Supercomputing Center. Around 70% of Kazakhstan’s population speaks Kazakh, and there is a growing interest in local languages, which motivated QazCode to create a local LLM. After a year and a half, however, the team had reached only around two billion tokens – insufficient for this purpose.

Sharavar explained that the breakthrough moment came when the GSMA connected QazCode with the Barcelona Supercomputing Center. This provided the necessary inspiration – Catalan is a smaller language than Kazakh, and by drawing on the shared knowledge base QazCode was able to adjust how it trained its models. This led to collaborations with the Kazakh government and major universities, with the 70B Kazakh language model now in the pilot phase – something which Sharavar described as being unbelievable just one year ago.

The new model has provided the opportunity to create. “The beauty of Gen AI and LLMs is that you can create new products, and we see that major interest, it's in education, healthcare products, recommendation systems with Gen AI”, said Sharavar. He noted that LLMs would enable new services for customers, for example teaching Kazakh language and history, which could incentivise government investment, but added that on the telco side, the opportunity to bring down customer service costs would be a key factor in driving adoption.

Cockle agreed that the capability to provide enterprise solutions in a local language would give operators the opportunity to position themselves as supporters at a national level, helping to keep low resource languages alive. “That's why we're so excited about this - it's all about keeping those local societies alive, and also the local economy, because then you can have a local personalised service in a particular country that may not be served by those seven languages that we're seeing in the mass market alone.”