Enabling Ethical AI Adoption

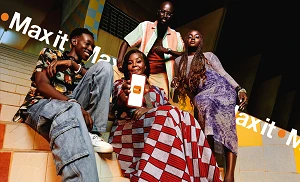

With around 75% of operators now launching Generative AI commercially, integrated AI strategies are increasingly essential for facilitating the interactions between AI and networks. Societal concerns around AI adoption put pressure on businesses to demonstrate an ethical approach in implementing this technology.

At a recent roundtable discussion titled 'AI 2025 – Shaping tomorrow’s telecoms landscape', experts from GSMA Intelligence as well as operators including Telefonica shared their insights into the responsible adoption of AI.

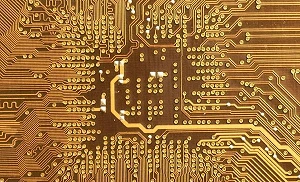

Peter Jarich, Head of GSMA Intelligence, opened the conversation with an overview of how the AI space has shifted in the past year and will continue to evolve in 2025 as the technology is rapidly adopted. He underlined that around two-thirds of operators have adopted an integrated AI strategy, saying: “AI is incredible for improving the way networks operate, for healthy operational efficiency, for improving what operators do with their networks, but to fully leverage AI to roll out those use cases that we want, we need networks that are capable of doing that.”

Jarich noted that increased AI use across networks would drive upstream traffic – traditionally, mobile networks are built to support substantially more downstream traffic, so this increased AI workload will affect how networks are designed and built. Operators are primarily using AI in their network operations, often for automating processes and troubleshooting as well as on the security side. Supporting Gen AI usage will involve additional investment in capacity to drive new traffic.

There are of course many questions over how operators will make money from AI; looking internally towards network operations is perhaps less risky than an external focus on the customer-facing side, but ultimately operators need to grow their businesses by generating revenue. Jarich noted a strong trend towards collaboration with major cloud players such as Google and Microsoft to leverage the innovation taking place across the ecosystem.

Security and Ethics

Jarich argued that in terms of security, there is a dual nature with AI: for years, operators have been using AI to help them understand the threat landscape and guard against it, but increasingly AI is being used to generate these threats – it will drive more fraud on networks. Around 90% of operators name security as their top strategic priority.

Zeroing in on a key theme, Jarich stated that responsible AI was not merely being paid lip service. Much like sustainability before it, ethical use of AI has emerged as a core pillar of business, and companies understand the importance and value of pursuing it. Just as commitments to Net Zero became widespread, Jarich said the same trend was clear with responsible AI, with over 70% of operators having an AI governance framework in place. Jarich acknowledged that certain markets were of course taking the lead on AI governance, but noted that the requirement is recognised globally.

Ethics in AI are of course crucial, but sustainability is equally essential. The mobile sector has led other industries in terms of net zero commitments, and while AI can help to drive improved efficiencies for networks, the increased energy usage to support AI workloads could more than offset this. Jarich said that it was important to consider these aspects together: 75% of an operator’s energy usage comes from their radio access network, so using AI sustainability solutions to optimise RAN efficiency makes complete sense and helps counter the increased energy consumption of AI.

Adopting AI Responsibly

Alix Jagueneau, Head of External Affairs at the GSMA, took the floor to discuss the GSMA’s Responsible AI Initiative, a partnership with McKinsey to assess the opportunity behind responsible AI. Over the next 15 to 20 years, the AI opportunity for telco is estimated at US$680 billion, but to capitalise on this, Jagueneau said it was crucial to link innovation with responsible deployment and ethics. She argued that since the telecoms industry is heavily regulated and highly reliant on trust, it makes better business sense to adopt AI responsibly. With 65% of operators adopting an AI strategy for their business, internal governance becomes a key focus, with organisations establishing champions to ensure governance is in place, anticipate potential issues, and manage their reputation.

In September, the GSMA launched the Responsible AI Maturity Roadmap, a voluntary common framework for the private sector to define a consistent way for operators to demonstrate that they are applying AI in a responsible manner. With reputation management so important for companies, the roadmap helps operators show their commitment to responsible AI and be seen as leaders in this area – particularly at a time when policymakers are focusing on AI governance. In Europe, the EU AI Act has been in place for the past few years, but during this same timeframe, governments globally have sought assurances that the private sector is deploying AI responsibly.

The GSMA works with operators across the globe, and they are not all at the same stage of deployment and adoption of AI: some are leaders, while others will take time to embrace AI and transform their business. Jagueneau noted that regardless of an operator’s AI maturity level, the roadmap helps to plan business strategies and offers best practice guidance for operators to implement AI in a responsible manner, whatever their ambitions. As more operators commit to the tool and use it, the GSMA can compile more resources and content to share learning across the industry.

Jagueneau described the RAI maturity roadmap as “a way to help in internal conversation across the business, to have these discussions before you roll out an AI tool services to think about different checkpoints. It's about being visionary as well - it's not just for the sake of compliance. If you think about responsible AI, from a compliance perspective, it's more to be ahead of the game, and not playing catch up.”

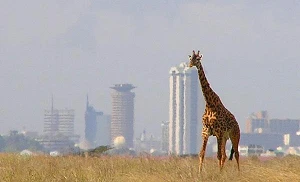

Around 22 mobile operators have adopted the RAI maturity roadmap, from a wide range of geographies – not just developed markets, but operators in Africa and Asia-Pacific as well. While the roadmap has been developed with insight from telcos, Jagueneau noted that it is industry-agnostic, and has the potential to be replicated in other areas.

AI Ethics in Corporate Governance

Telefonica’s Head of AI Ethics Joaquina Salado went into further detail about Telefonica’s adoption of the RAI maturity roadmap. She explained that Telefonica established ethical principles in 2017 to set its ambitions on working with AI, adding that the firm has recently updated these principles to reinforce certain elements, for example on sustainability. Telefonica’s AI governance model includes roles, responsibilities and processes to ensure that it reaches the entire organisation; this has become particularly important with the rapid adoption of Gen AI across the company.

As part of its adoption of the RAI maturity roadmap, Telefonica has created the role of responsible AI champions across its organisation, initially in the areas working directly with AI but now almost everywhere following the proliferation of Gen AI. Salado noted that this gives the operator the opportunity to instil an ethical mindset as it enacts cultural change within the company – and that this helps in retaining desirable talent, as increasingly workers are attracted to companies with strong CSR values.

“Together with this governance model, we work a lot in creating awareness, communicating, and training our employees. We have responsible AI integrated completely into the AI strategy, both from the business side, but also on training - whenever we are training our employees on AI, responsible AI is a key part of it.”

Telefonica also uses an internal group of experts with different specialities to escalate use cases that might have more ethical components or be higher risk now that the AI Act is in place. Salado noted that the group is mapping the regulation: “in the case of Europe, we have the requirements from the AI Act map in our workflow, where we are analysing and doing the risk assessment of our use cases, and it is also ready to implement other regulations that might come out in the other geographies where we are present, like in Latin America.”

Salado cited Verify, a tool that Telefonica has developed, as an example of Responsible AI combining business opportunities with societal benefits. Verify can identify text, video and images that have been created by AI, helping companies and individuals identify and fight against misinformation. Taking this responsible approach helps to increase trust among customers and society at large, while mitigating risks from the outset.